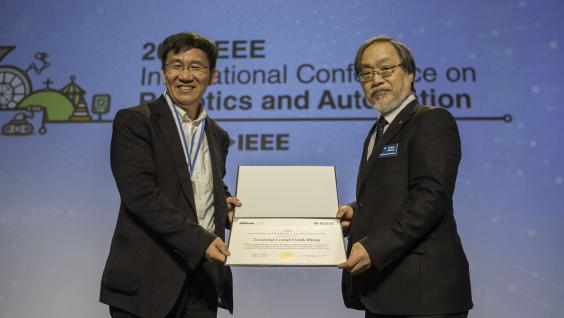

ECE Faculty, Students and Alumni Recognized with Three Awards at the 2019 IEEE International Conference on Robotics and Automation

ECE won three prestigious awards at the 2019 IEEE International Conference on Robotics and Automation (ICRA), the flagship conference of the IEEE Robotics and Automation Society, in recognition of the robotics work of our faculty members, students and alumni.

The three awards are the 2019 IEEE Robotics and Automation Award, the 2019 IEEE ICRA Best Paper Award in Robot Manipulation, and an Honorable Mention for the IEEE Transactions on Robotics King-Sun Fu Memorial Best Paper Award.

The award ceremony was held on 22 May 2019 in Montreal, Canada.

About the Awards

The 2019 IEEE Robotics and Automation Award

Awarded to Prof. Zexiang LI and our alumnus and DJI founder Frank WANG, with the citation “For contributions to the development and commercialization of civilian drones, aerial imaging technology”.

2019 IEEE ICRA Best Paper Award in Robot Manipulation

Awarded to our postgraduate student KIM Chung Hee and his supervisor Prof. Jungwon SEO for the paper entitled "Shallow-Depth Insertion: Peg in Shallow Hole through Robotic In-Hand Manipulation".

Paper: "Shallow-Depth Insertion: Peg in Shallow Hole through Robotic In-Hand Manipulation"

The accompanying video complements the paper and demonstrates 3D-printed card assembly, picture frame assembly, mobile phone battery insertion, Lego block mating, container lid assembly, and dry cell battery insertion. In addition, shallow-depth insertion is performed vertically to validate the stability of the technique.

Honorable Mention in IEEE Transactions on Robotics King-Sun Fu Memorial Best Paper Award

Awarded to Prof. Shaojie SHEN and his postgraduate students Tong QIN and Peiliang LI for the paper entitled "VINS-Mono: A Robust and Versatile Monocular Visual-Inertial State Estimator", published in the IEEE Transactions on Robotics, volume 34, number 4, pages 1004-1020, August 2018.

Abstract of the Paper

One camera and one low-cost inertial measurement unit (IMU) form a monocular visual-inertial system (VINS), which is the minimum sensor suite (in size, weight, and power) for the metric six degrees-of-freedom (DOF) state estimation. In this paper, we present VINS-Mono: a robust and versatile monocular visual-inertial state estimator. Our approach starts with a robust procedure for estimator initialization. A tightly coupled, nonlinear optimization-based method is used to obtain highly accurate visual-inertial odometry by fusing preintegrated IMU measurements and feature observations. A loop detection module, in combination with our tightly coupled formulation, enables relocalization with minimum computation. We additionally perform 4-DOF pose graph optimization to enforce the global consistency. Furthermore, the proposed system can reuse a map by saving and loading it in an efficient way. The current and previous maps can be merged together by the global pose graph optimization. We validate the performance of our system on public datasets and real-world experiments and compare against other state-of-the-art algorithms. We also perform an onboard closed-loop autonomous flight on the microaerial-vehicle platform and port the algorithm to an iOS-based demonstration. We highlight that the proposed work is a reliable, complete, and versatile system that is applicable for different applications that require high accuracy in localization. We open source our implementations for both PCs (https://github.com/HKUST-Aerial-Robotics/VINS-Mono) and iOS mobile devices (https://github.com/HKUST-Aerial-Robotics/VINS-Mobile).

Related Links

The 2019 IEEE Robotics and Automation Award

2019 IEEE ICRA Best Paper Award in Robot Manipulation

Honorable Mention in IEEE Transactions on Robotics King-Sun Fu Memorial Best Paper Award